Aphasia: the words go, but the mind doesn’t.

Aphasia is a language disorder, most often caused by stroke. It damages the left-hemisphere brain networks that produce or understand speech, but it does not affect intelligence. People with aphasia usually know exactly what they want to say. They just can’t reach the words.

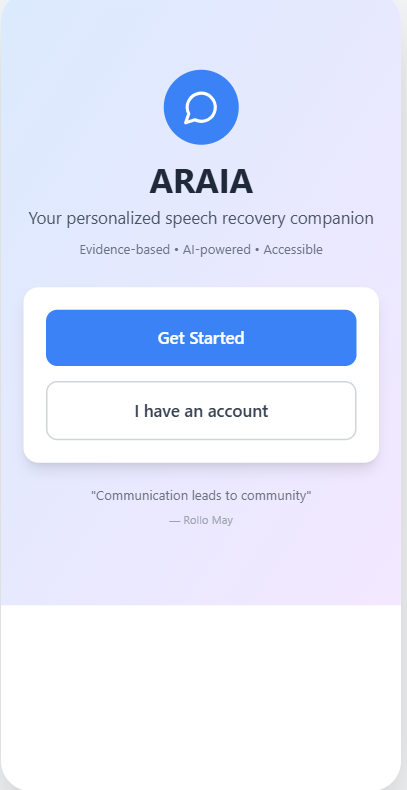

The standard treatment is in-person speech therapy: weekly clinic visits, a therapist’s schedule, transportation, and costs that go up with how severe the stroke was. Most patients get only a fraction of the practice their brains actually need to rewire. A.R.A.I.A. is meant to fill that gap: a phone-based version of the same therapy, available between sessions.

Melodic Intonation Therapy.

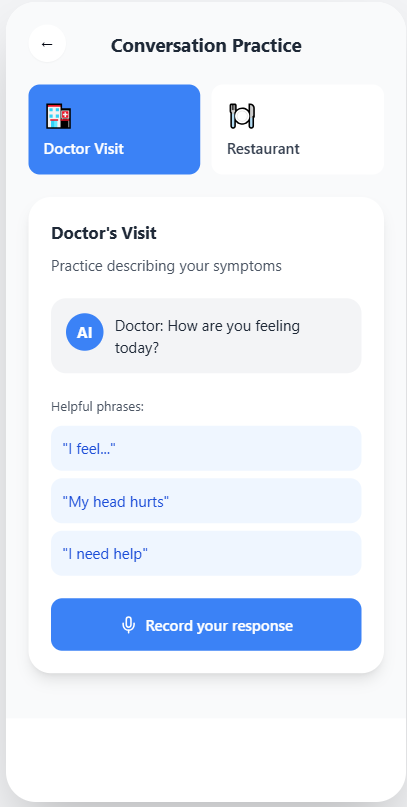

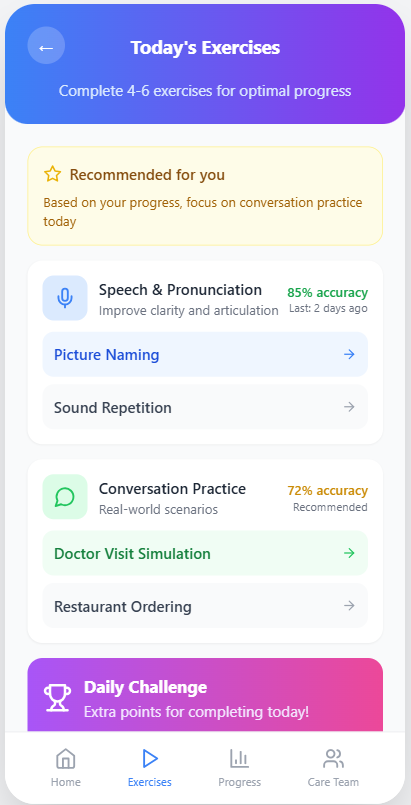

A.R.A.I.A. is built on Melodic Intonation Therapy (MIT), a clinically validated method that uses melody, rhythm, and tapping to recruit the right hemisphere’s music networks for some of the work the damaged left hemisphere can no longer do. People who can’t speak the word “water” can sometimes sing it, because the pathways for melody often survive the stroke.

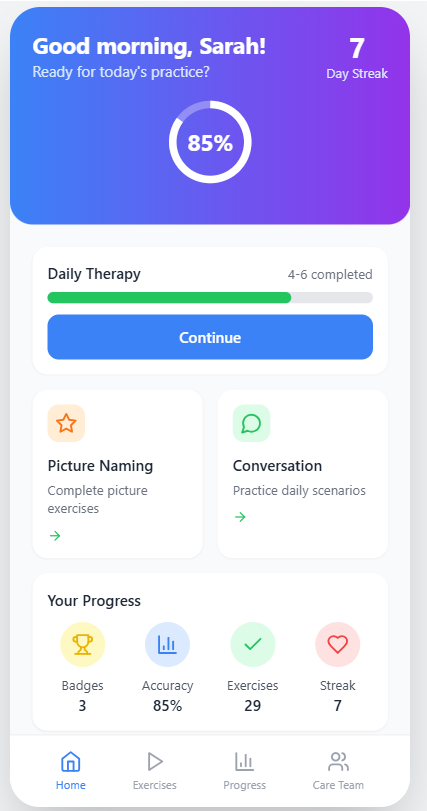

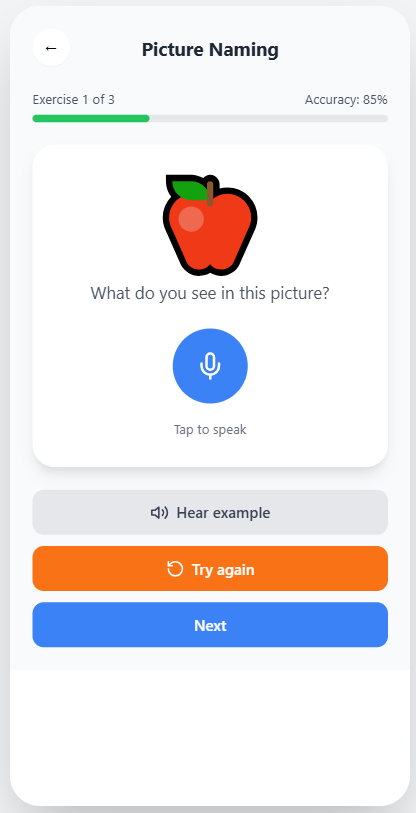

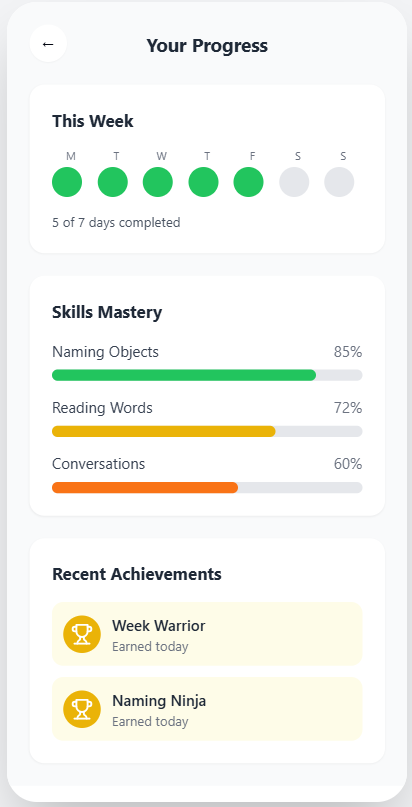

The app has five modules: paced repetition, picture-naming with melodic prompting, conversational practice with an AI partner, daily structured routines, and progress tracking calibrated against the outcome measures used in published MIT trials. Every clinical claim in the app traces back to a peer-reviewed paper that I or a teammate read and verified.

Designed for someone whose patience is already at its limit.

Big targets. Calm colors. One thing per screen. Voice input on equal footing with typing. Progress visible without nagging.

The capstone presentation.

We presented A.R.A.I.A. to Stanford CNI-X 2025 faculty, staff, and families at the final demo. The deck walks through the clinical problem, the MIT mechanism, the system architecture, and a demo of the app.

A six-person capstone.

A.R.A.I.A. was built by a team of six CNI-X students. We split the work evenly across research, fact-checking the clinical claims, design, and the final presentation. I was the youngest in the cohort and the only rising freshman. Pulling my own weight meant being careful with the science: every claim I wrote on the slides could be traced back to a paper I had read and verified myself.

From prototype to clinic.

Right now A.R.A.I.A. is a polished concept and a working prototype. To actually put it in front of patients, the next steps would be:

- A small pilot with post-stroke aphasia patients, in partnership with a speech-language pathology clinic.

- IRB review, and validation of the app’s outcome measures against published MIT trial benchmarks.

- Moving voice-fluency assessment onto the device itself (privacy-preserving) instead of sending audio to the cloud.

- A family / caregiver companion view: recovering from aphasia is a household project, not something the patient does alone.

If you work in stroke rehab, speech-language pathology, or BCI research and any of this is interesting to you, please reach out: oztekinperi@icloud.com.